The input pipeline is built with the following steps:

- Extract a list of conversation pairs from movie_conversations.txt and movie_lines.txt.
- Preprocess each sentence by removing special characters.
- Build a tokenizer (mapping text to IDs and IDs to text) with the TensorFlow Datasets SubwordTextEncoder.
- Tokenize each sentence and add START_TOKEN and END_TOKEN to indicate the start and end of each sentence.
- Filter out sentences that contain more than MAX_LENGTH tokens.
- Build a tf.data.Dataset with the tokenized sentences.

Notice that Transformer is an autoregressive model: it makes predictions one part at a time and uses its output so far to decide what to do next. During training this example uses teacher forcing. Teacher forcing is passing the true output to the next time step regardless of what the model predicts at the current time step.

The full preprocessing code can be found in the Prepare Dataset section of the colab notebook.

I really, really, really wanna go, but i can t.
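The pipeline steps and the teacher-forcing idea described above can be sketched in plain Python. This is a minimal illustration, not the notebook's code: a whitespace tokenizer stands in for SubwordTextEncoder, plain lists stand in for tf.data.Dataset, and the function names, the cleaning rules, the `MAX_LENGTH` value, and the example sentences are all assumptions made for this sketch.

```python
import re

MAX_LENGTH = 8  # hypothetical limit, chosen small for this toy example


def preprocess_sentence(sentence):
    """Lowercase, put spaces around basic punctuation, drop other characters."""
    sentence = sentence.lower().strip()
    sentence = re.sub(r"([?.!,])", r" \1 ", sentence)
    # Anything that is not a letter or basic punctuation becomes a single space.
    sentence = re.sub(r"[^a-z?.!,]+", " ", sentence)
    return sentence.strip()


def build_vocab(sentences):
    """Map each distinct token to an ID starting at 1 (0 is left for padding)."""
    vocab = {}
    for sentence in sentences:
        for token in sentence.split():
            vocab.setdefault(token, len(vocab) + 1)
    return vocab


def tokenize_pairs(pairs, vocab):
    """Encode each pair, wrap it in start/end IDs, and drop over-long pairs."""
    start_token, end_token = len(vocab) + 1, len(vocab) + 2

    def encode(sentence):
        return [start_token] + [vocab[t] for t in sentence.split()] + [end_token]

    examples = []
    for question, answer in pairs:
        q_ids, a_ids = encode(question), encode(answer)
        if len(q_ids) <= MAX_LENGTH and len(a_ids) <= MAX_LENGTH:
            examples.append((q_ids, a_ids))
    return examples


def teacher_forcing_split(answer_ids):
    """Decoder input is the true answer minus its last token;
    the target is the same answer shifted left by one position."""
    return answer_ids[:-1], answer_ids[1:]


if __name__ == "__main__":
    # Hypothetical conversation pairs, not taken from the corpus.
    raw_pairs = [
        ("Where have you been?", "I was at the library."),
        ("This sentence is far too long to keep in the toy dataset, "
         "so it gets dropped.", "Okay."),
    ]
    cleaned = [(preprocess_sentence(q), preprocess_sentence(a)) for q, a in raw_pairs]
    vocab = build_vocab([s for pair in cleaned for s in pair])
    for question_ids, answer_ids in tokenize_pairs(cleaned, vocab):
        decoder_input, target = teacher_forcing_split(answer_ids)
        print(question_ids, decoder_input, target)
```

With teacher forcing, the decoder always receives the true previous tokens (`decoder_input`) and is trained to predict the next true token (`target`), so one wrong prediction does not derail the rest of the sequence during training.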

"+++$+++" is used as the field separator in all the files within the corpus dataset.

movie_conversations.txt has the following format: ID of the first character, ID of the second character, ID of the movie in which the conversation occurred, and a list of line IDs. The character and movie information can be found in movie_characters_metadata.txt and movie_titles_metadata.txt respectively.

Sample of conversation pairs from movie_conversations.txt:

u0 +++$+++ u2 +++$+++ m0 +++$+++

movie_lines.txt has the following format: ID of the conversation line, ID of the character who uttered this phrase, ID of the movie, name of the character, and the text of the line.

Sample of conversation text from movie_lines.txt:

L897 +++$+++ u5 +++$+++ m0 +++$+++ KAT +++$+++ He was, like, a total babe

We are going to build the input pipeline from these two files.
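The field layout described above can be parsed with a few lines of plain Python. This is a rough sketch under stated assumptions: the function names are hypothetical, the list of line IDs is assumed to be written as a Python-style list literal such as `['L1', 'L2']`, and pairing each line with the one that follows it is one plausible way to form conversation pairs, not necessarily what the notebook does.

```python
import ast

SEPARATOR = " +++$+++ "


def parse_movie_line(raw):
    """Split one movie_lines.txt record into its five fields."""
    # maxsplit=4 keeps the text intact even if it happens to contain the separator.
    line_id, character_id, movie_id, name, text = raw.rstrip("\n").split(SEPARATOR, 4)
    return {
        "line_id": line_id,
        "character_id": character_id,
        "movie_id": movie_id,
        "character_name": name,
        "text": text,
    }


def parse_conversation(raw):
    """Split one movie_conversations.txt record into its four fields."""
    first_id, second_id, movie_id, line_ids = raw.rstrip("\n").split(SEPARATOR, 3)
    return {
        "first_character_id": first_id,
        "second_character_id": second_id,
        "movie_id": movie_id,
        # Assumption: the last field is a list literal, e.g. "['L1', 'L2']".
        "line_ids": ast.literal_eval(line_ids),
    }


def conversation_pairs(line_ids, id_to_text):
    """Pair every line with the line that follows it in the conversation."""
    return [(id_to_text[a], id_to_text[b]) for a, b in zip(line_ids, line_ids[1:])]


if __name__ == "__main__":
    record = parse_movie_line(
        "L897 +++$+++ u5 +++$+++ m0 +++$+++ KAT +++$+++ He was, like, a total babe"
    )
    print(record["line_id"], record["character_name"], record["text"])
```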

If the input does have a temporal/spatial relationship, like text, some positional encoding must be added or the model will effectively see a bag of words.

If you are interested in knowing more about Transformer, check out The Annotated Transformer and Illustrated Transformer.

Dataset

We are using the Cornell Movie-Dialogs Corpus as our dataset, which contains more than 220k conversational exchanges between more than 10k pairs of movie characters.

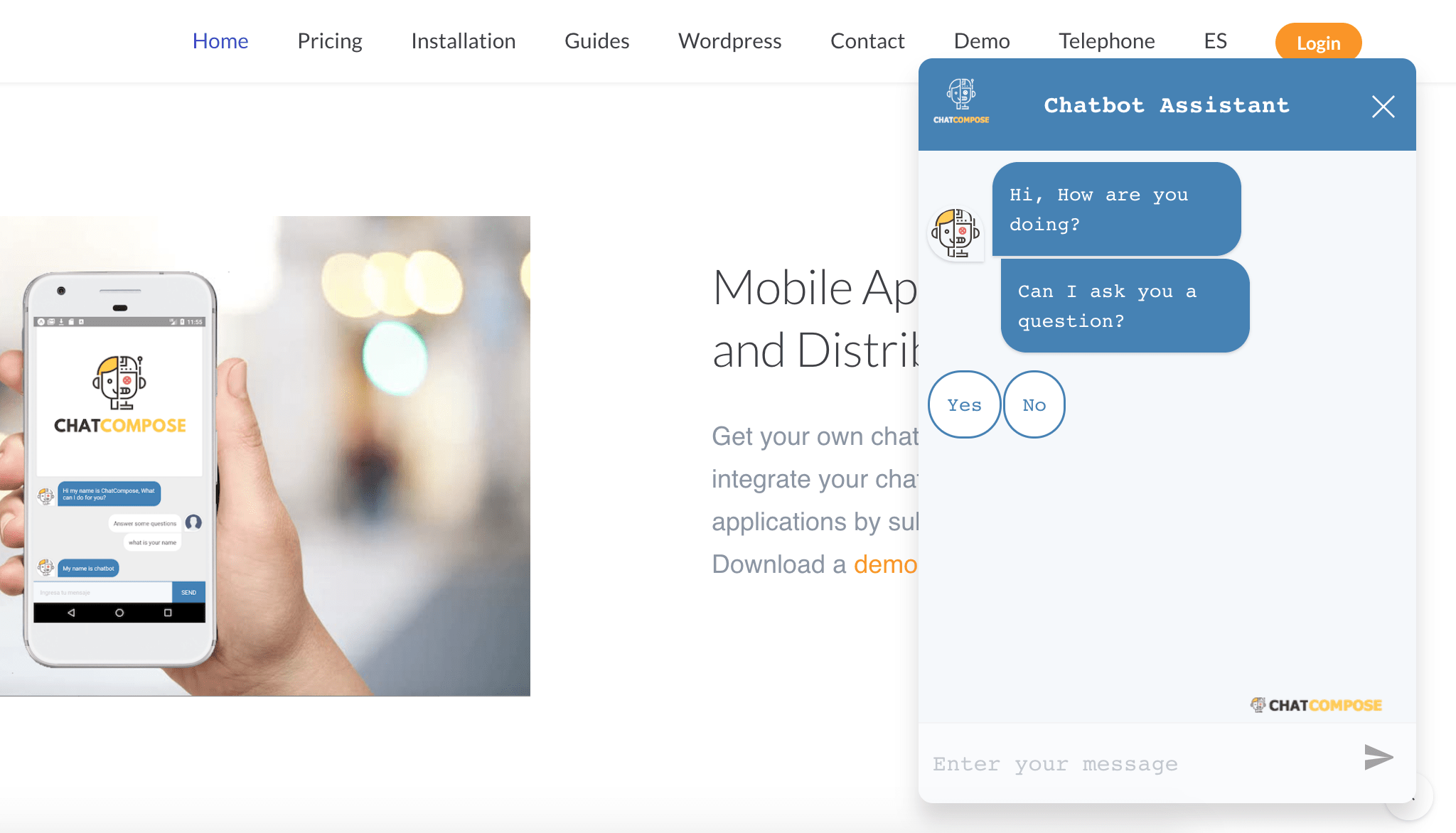

The use of artificial neural networks to create chatbots is increasingly popular nowadays. However, teaching a computer to have natural conversations is very difficult and often requires large and complicated language models. With all the changes and improvements made in TensorFlow 2.0, we can build complicated models with ease. In this post, we will demonstrate how to build a Transformer chatbot. All of the code used in this post is available in this colab notebook, which will run end to end (including installing TensorFlow 2.0). This article assumes some knowledge of text generation, attention and transformer.

In this tutorial we are going to focus on:

- Preprocessing the Cornell Movie-Dialogs Corpus using TensorFlow Datasets and creating an input pipeline using tf.data.
- Implementing MultiHeadAttention with Model subclassing.
- Implementing a Transformer with Functional API.

Sample conversations of a Transformer chatbot trained on Movie-Dialogs Corpus:

Input: i am not crazy, my mother had me tested.

Transformer

Transformer, proposed in the paper Attention is All You Need, is a neural network architecture based solely on the self-attention mechanism and is very parallelizable. A Transformer model handles variable-sized input using stacks of self-attention layers instead of RNNs or CNNs. This general architecture has a number of advantages:

- It makes no assumptions about the temporal/spatial relationships across the data. This is ideal for processing a set of objects.
- Layer outputs can be calculated in parallel, instead of in series like an RNN.
- Distant items can affect each other's output without passing through many recurrent steps or convolution layers.

Note that for a time series, the output for a time step is calculated from the entire history instead of only the inputs and current hidden state.
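To make the points about self-attention more concrete, here is a stripped-down sketch of scaled dot-product self-attention in plain Python. It is an illustration only: it uses the input vectors directly as queries, keys, and values, and it leaves out the learned projections, multiple heads, and masking that a real Transformer layer would have. The function names and the toy input are assumptions for this example.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x):
    """Scaled dot-product self-attention with queries = keys = values = x.

    x is a list of equal-length vectors, one per position. Every output
    vector is a weighted average over all positions, so distant items
    interact directly, and each row could be computed independently
    (the loop here is sequential only for readability).
    """
    depth = len(x[0])
    outputs = []
    for query in x:
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(depth)
            for key in x
        ]
        weights = softmax(scores)
        outputs.append(
            [sum(w * value[d] for w, value in zip(weights, x)) for d in range(depth)]
        )
    return outputs


if __name__ == "__main__":
    sequence = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    shuffled = [sequence[2], sequence[0], sequence[1]]
    print(self_attention(sequence))
    # Shuffling the inputs shuffles the outputs in the same way:
    # the layer itself has no notion of position.
    print(self_attention(shuffled))
```

The same function works for any sequence length, which is how the variable-sized input is handled, and reordering the input only reorders the output rows, which is what "no assumptions about the temporal/spatial relationships" means in practice.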
